Claude Code is an AI coding agent by Anthropic that reads code, edits files, and runs commands in the terminal.

Installation

Configure with CC Switch (Recommended)

Open CC Switch and add a RuoLi configuration:

| Field | Value |
|---|---|
| API URL | https://ruoli.dev |
| API Key | Your Key |

Switch Models

Beyond native Claude models, RuoLi routes to third-party models such as gpt-5.5, GLM, Kimi, and DeepSeek. Open CC Switch → Edit Provider → Model Mapping, point each Claude alias at the target model, and save. No JSON editing is required.

| Field | Purpose |
|---|---|
| Main Model | The default model Claude Code calls |
| Thinking Model | Used when extended / reasoning mode is on |
| Haiku Default Model | Target model for the haiku alias |
| Sonnet Default Model | Target model for the sonnet alias |
| Opus Default Model | Target model for the opus alias |

Leave these fields blank if your provider serves Claude models natively; fill them in only when you want to route an alias to a different model.

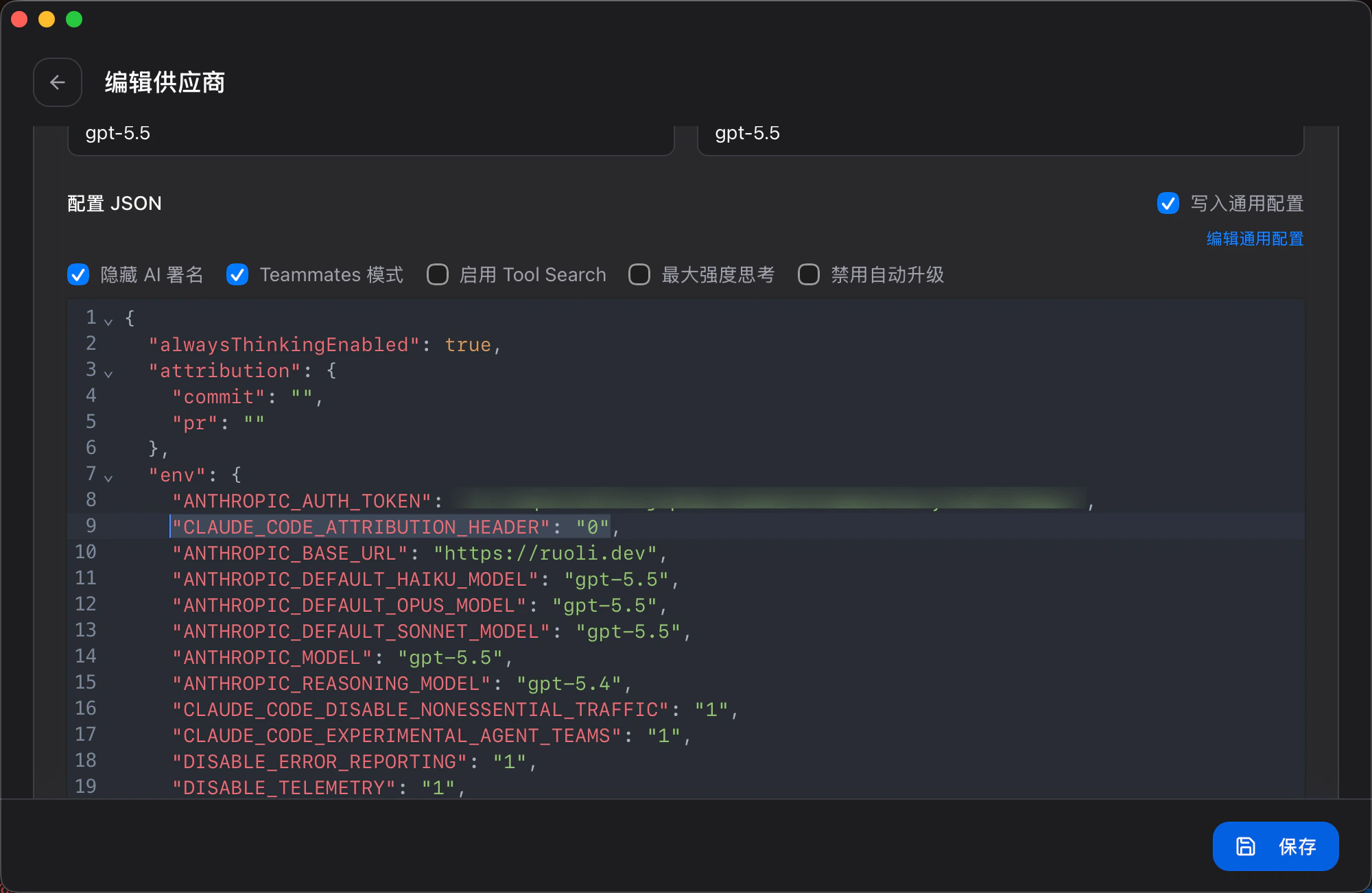
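To make the mapping concrete, the sketch below turns the fields above into a set of env entries. It assumes the fields correspond to Claude Code's `ANTHROPIC_MODEL` and `ANTHROPIC_DEFAULT_*_MODEL` environment variables; the keys CC Switch actually writes may differ. `FIELD_TO_ENV` and `build_model_env` are hypothetical names used only for illustration, and the Thinking Model field is left out because its corresponding key is not stated here.

```python
import json

# Assumed correspondence between CC Switch fields and Claude Code
# environment variables; CC Switch may write different keys.
FIELD_TO_ENV = {
    "main": "ANTHROPIC_MODEL",
    "haiku": "ANTHROPIC_DEFAULT_HAIKU_MODEL",
    "sonnet": "ANTHROPIC_DEFAULT_SONNET_MODEL",
    "opus": "ANTHROPIC_DEFAULT_OPUS_MODEL",
}


def build_model_env(mapping: dict[str, str]) -> dict[str, str]:
    """Return env entries for every field that has a non-blank target model."""
    env = {}
    for field, target in mapping.items():
        if field not in FIELD_TO_ENV:
            raise KeyError(f"unknown field: {field}")
        if target.strip():  # blank means "keep the native Claude model"
            env[FIELD_TO_ENV[field]] = target.strip()
    return env


if __name__ == "__main__":
    # Route the main and opus aliases to gpt-5.5; leave haiku and sonnet native.
    example = {"main": "gpt-5.5", "opus": "gpt-5.5", "haiku": "", "sonnet": ""}
    print(json.dumps({"env": build_model_env(example)}, indent=2))
```

Blank fields are dropped rather than written as empty strings, which mirrors the advice to leave a field empty when no rerouting is wanted.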

Disable Attribution Header (Required)

In CC Switch → Edit Provider → Config JSON, add one line to env:

~/.claude/settings.json — see the example below.

Manual Configuration

Edit ~/.claude/settings.json:
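A minimal sketch of such an edit, under stated assumptions: it merges two env entries into the existing settings without discarding other keys, and prints the result for review. `ANTHROPIC_BASE_URL` and `ANTHROPIC_AUTH_TOKEN` are Claude Code environment variables, but that RuoLi expects exactly these two is an assumption. `merge_env` and `RUOLI_ENV` are hypothetical names, and `your-key` is a placeholder.

```python
import json
from pathlib import Path


def merge_env(settings: dict, env: dict) -> dict:
    """Return a copy of settings whose "env" block also contains env."""
    merged = dict(settings)
    merged["env"] = {**settings.get("env", {}), **env}
    return merged


# Assumed variable names; the URL is the API URL from the table above
# and "your-key" is a placeholder for your own key.
RUOLI_ENV = {
    "ANTHROPIC_BASE_URL": "https://ruoli.dev",
    "ANTHROPIC_AUTH_TOKEN": "your-key",
}

if __name__ == "__main__":
    path = Path.home() / ".claude" / "settings.json"
    current = json.loads(path.read_text()) if path.exists() else {}
    # Print the merged result for review instead of overwriting the file.
    print(json.dumps(merge_env(current, RUOLI_ENV), indent=2))
```

The script only prints the merged JSON, so an existing settings file is never modified by accident; copy the output into the file once it looks right.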

Configure Context Compression

Claude Code auto-compresses context at about 83% of the context window by default. If you’re using a 1M context window, you can adjust when compression triggers via CLAUDE_AUTOCOMPACT_PCT_OVERRIDE.

For example, to trigger compression at 180k tokens (180k / 1000k = 18%):

Add to the env block in ~/.claude/settings.json:
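The arithmetic above generalizes to any target: divide the desired trigger point by the window size and express the result as a whole percentage. The sketch below does that and prints the corresponding env entry. `autocompact_pct` is a hypothetical helper, and writing the value as a string is an assumption based on how env values are usually stored in settings.json.

```python
import json


def autocompact_pct(trigger_tokens: int, window_tokens: int) -> int:
    """Whole-number percentage of the window at which compression triggers."""
    if not 0 < trigger_tokens <= window_tokens:
        raise ValueError("trigger must be positive and fit inside the window")
    return round(100 * trigger_tokens / window_tokens)


if __name__ == "__main__":
    pct = autocompact_pct(180_000, 1_000_000)  # 180k / 1000k = 18%
    # Assumption: the override is stored as a string, like other env values.
    fragment = {"env": {"CLAUDE_AUTOCOMPACT_PCT_OVERRIDE": str(pct)}}
    print(json.dumps(fragment, indent=2))
```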

| Value | Trigger point (1M window) | Use case |
|---|---|---|
| 18 | ~180k tokens | Keep context clean frequently |
| 50 | ~500k tokens | Balance performance and context |
| 83 | ~830k tokens (default) | Maximize context utilization |

Official Documentation: code.claude.com/docs